🙀 The 4-tool agent quietly powering OpenClaw

PLUS: OpenClaw's secret is a 4-tool coding agent named Pi

Welcome, humans.

Help Keep The Neuron Alive

A few of you asked where to donate. We set up crypto wallets — every fee that comes in goes straight to keeping The Neuron running. Thank you for keeping us caffeinated and the daily emails flowing.

So apparently robots can tie zip ties now, which has some interesting implications for my recurring nightmare about being kidnapped by robots…

Anyway, watch this clip of Generalist's Gen-1 robot tying a zip tie. Halfway through, the robot loses its grip on the zip tie's head and uses its other hand to readjust before pulling. Generalist research lead Andy Zeng called it "improvisational intelligence in action" and said it gave him an "instant dopamine hit."

The self-correcting wasn't a one-off this week. Vector Wang's team at Rice showed DRIS, a method that catches flying balls using a completely flat plate (no cup, no net, zero real-world fine-tuning).

And AGIBOT Finch shipped Learning While Deploying, a 16-robot fleet that improves from real-world tasks while making cocktails, restocking groceries, and brewing Gongfu tea.

The robots are vibing now. They're improvising. They're catching balls with flat plates. They're brewing tea. Basically, they’re chill-maxxing. So why am I so stressed?

Your job is fine, your job is fine, your job is fi-

Here’s what happened in AI today:

🙀 OpenClaw is powered by a tiny 4-tool agent that builds itself

📰 OpenAI restricted GPT-5.5-Cyber after slamming Anthropic for the same

📰 Anthropic is reportedly raising $40-50B at a $900B valuation

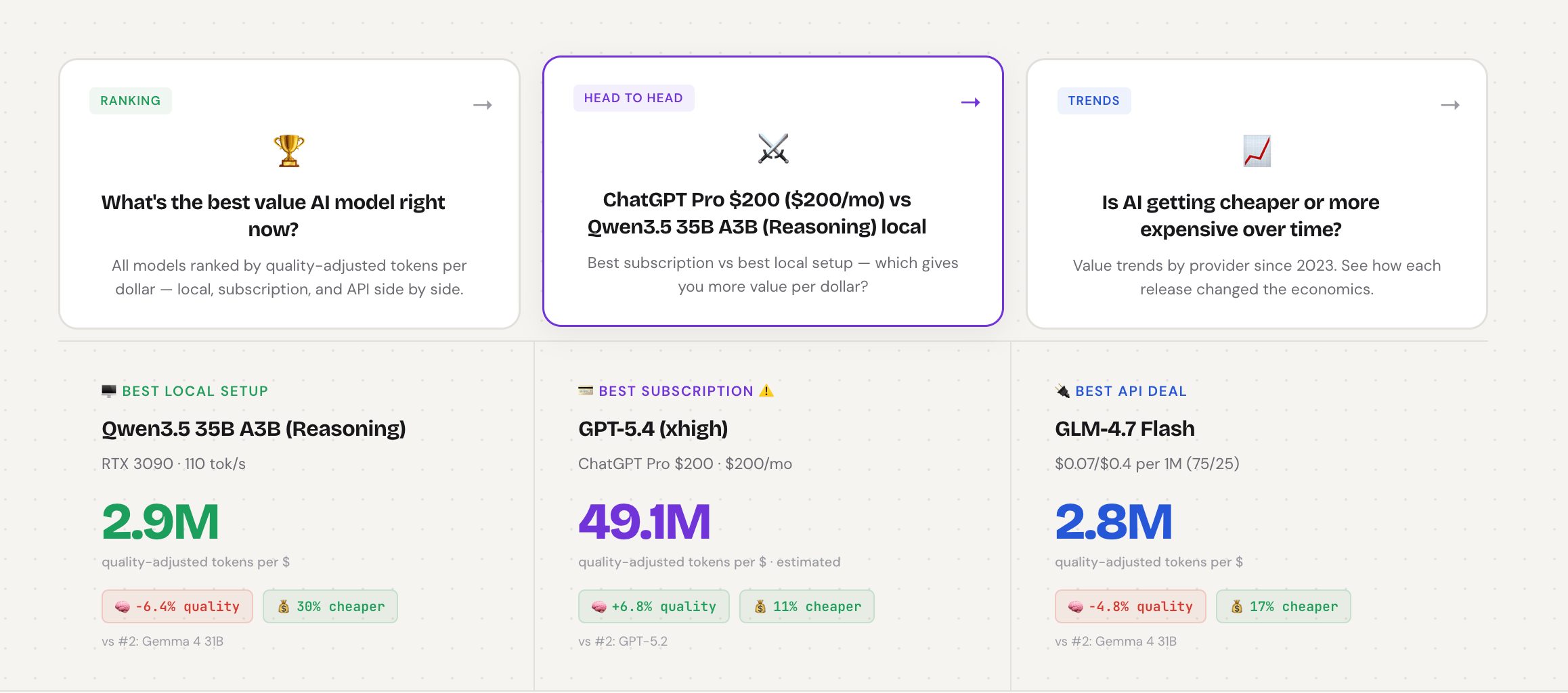

🍪 Best Value AI 2026 ranks 37 LLMs by per-dollar performance

💡 Demis Hassabis: the West needs open-source AI to beat China

Hey: Want to reach 700,000+ AI-hungry readers? Advertise with us!

P.S: Love robots? We’re starting a new robotics newsletter! Sign up early here.

🙀 What's Actually Powering OpenClaw? An AI Coding Agent That Builds Itself

You've probably heard of OpenClaw by now, the WhatsApp-based personal AI assistant from Peter Steinberger that exploded over Christmas. What you probably haven't heard: the whole thing runs on a tiny open-source coding tool called Pi, built by an Austrian developer fed up with bigger AI tools getting weirder with each update.

Pi's creator Mario Zechner just sat down with The Pragmatic Engineer for 90 minutes of refreshingly grumpy clarity about AI tools. Joining him: Armin Ronacher, creator of the Flask web framework that runs huge chunks of the internet.

Here's what happened:

Pi is a "coding agent" (an AI pair-programmer for developers) that ships with only four built-in tools: read, write, edit, and bash.

Anything else (plan mode, integrations, custom interfaces) gets built by users asking Pi to modify Pi itself; non-engineers have done this with no coding skills.

Mario's recent blog post "Slow the F*** Down" got standing ovations at AI Engineer Europe; his core argument is that agent armies create complexity their own future selves can't untangle.

After interviewing 30+ engineering teams, Armin found code quality has dropped across the industry, with serious projects shipping what he calls "vibe slop."
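To make the four-tool idea concrete, here is a minimal Python sketch of what a read/write/edit/bash tool set with a dispatcher might look like. This is not Pi's actual code: the function signatures, the `TOOLS` registry, the `dispatch` helper, and the shape of the tool-call dictionary are all assumptions made for illustration.

```python
import subprocess
from pathlib import Path


def read(path: str) -> str:
    """Return the full text of a file."""
    return Path(path).read_text(encoding="utf-8")


def write(path: str, content: str) -> str:
    """Create or overwrite a file with the given content."""
    Path(path).write_text(content, encoding="utf-8")
    return f"wrote {len(content)} characters to {path}"


def edit(path: str, old: str, new: str) -> str:
    """Replace one exact occurrence of `old` with `new` in a file."""
    text = Path(path).read_text(encoding="utf-8")
    if text.count(old) != 1:
        # Refuse ambiguous edits so the change lands where it was intended.
        raise ValueError("`old` must match exactly once")
    Path(path).write_text(text.replace(old, new), encoding="utf-8")
    return f"edited {path}"


def bash(command: str, timeout: float = 30.0) -> str:
    """Run a shell command and return its combined stdout and stderr."""
    result = subprocess.run(
        command, shell=True, capture_output=True, text=True, timeout=timeout
    )
    return result.stdout + result.stderr


# Hypothetical registry: the whole built-in tool surface is four entries.
TOOLS = {"read": read, "write": write, "edit": edit, "bash": bash}


def dispatch(call: dict) -> str:
    """Route a tool call shaped like {"tool": "read", "args": {...}}."""
    tool = TOOLS.get(call["tool"])
    if tool is None:
        raise KeyError(f"unknown tool: {call['tool']}")
    return tool(**call["args"])
```

In a setup like this, anything beyond the four tools would be added by editing files and running commands with the tools themselves, which is the "build what you need" idea described above.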

Why this matters: Their central argument is that agents don't feel pain. Humans hate maintaining bad code, so we eventually clean it up. Agents happily generate 10,000 lines of garbage that future agents can't fully process (a model can only read a limited amount of text at once, its context window; once a codebase outgrows that window, the next agent can't see all of it). Multiply that by every company racing to "10x productivity with agent swarms," and you get the brittle, buggy software you've already started noticing in apps you use daily.
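The memory-limit point can be sketched as back-of-the-envelope arithmetic. The snippet below is a rough illustration only: the 4-characters-per-token ratio is a common rule of thumb rather than a property of any particular model, and `estimate_tokens` and `visible_fraction` are hypothetical helper names.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; the 4 chars/token ratio is an assumed heuristic."""
    return math.ceil(len(text) / chars_per_token)


def visible_fraction(files: dict[str, str], window_tokens: int) -> float:
    """Estimate how much of a codebase fits in one context window (0.0 to 1.0)."""
    total = sum(estimate_tokens(body) for body in files.values())
    if total == 0:
        return 1.0  # An empty codebase trivially fits.
    return min(1.0, window_tokens / total)
```

Under these assumptions, a codebase whose estimated token count is twice the window size leaves the next agent seeing at most half of it in a single pass, however the files are chosen.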

Our take: The Pi philosophy ("ship a tiny core, let users build what they need") is a preview of where AI tools are heading for everyone, not just coders. The faster the models get, the more the value shifts to your taste in deciding what NOT to build. Mario's bet is that in two years, the personalization layer of every AI tool will look more like Pi than like the all-singing, all-dancing platforms we're using today.

The real question: when your AI tools can rewrite themselves, are you actually in charge, or just delegating one more decision you'll regret later?

FROM OUR PARTNERS

MCP vs. A2A: Inside the protocols powering the next wave of AI agents

Everyone’s talking about MCP and A2A, but few teams are actually deploying them in production. As agent protocols evolve, the decisions teams make now will shape how AI systems are built for years to come.

Join Weights & Biases, Arcade.dev, Redis and Fast MCP as they discuss what cuts through the noise: real builders, real tradeoffs, real production war stories.

🎓 AI Skill of the Day: Two New Prompting Guides Dropped. They Punish the Same Habit.

Quick gut-check: still prompting the way you did six months ago? Anthropic and OpenAI both quietly published new prompting guides this month (Claude, GPT-5.5 guidance, GPT-5.5 migration), and Alex Prompter caught the awkward part: the same vague-prompting habit now gets penalized by both, from opposite directions.

Claude 4.7 went literal. It does exactly what you type and no longer compensates for fuzzy intent, so vague instructions that worked on 4.6 now produce narrow, literal, sometimes worse output. The model didn't regress; the prompts did.

GPT-5.5 went autonomous. OpenAI's guide tells you to drop the step-by-step process scripts older models needed; on 5.5 that detail now creates noise and produces mechanical answers. Describe the outcome; let the model pick the path.

Shared lesson: spend two minutes writing down what success looks like before you open the chat. For GPT-5.5, OpenAI literally hands you the structure to pin to your most-used assistant. Try this:

Role: [what the model is and the job to be done]

# Goal

[user-visible outcome]

# Success criteria

[what must be true before the final answer]

# Constraints

[policy, safety, business, and evidence limits]

# Output

[length, sections, tone]

# Stop rules

[when to retry, fall back, abstain, ask, or stop]

For Claude 4.7, same thinking, opposite implementation: be surgically specific about every variable in your task. The model won't infer for you anymore.
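If you reuse the GPT-5.5 structure above across several assistants, a small helper can keep the sections consistent. This is only a sketch: `build_prompt` and its parameter names are made up for illustration, and the section titles are simply copied from the template shown here.

```python
def build_prompt(
    role: str,
    goal: str,
    success_criteria: str,
    constraints: str,
    output: str,
    stop_rules: str,
) -> str:
    """Assemble the Role / Goal / Success criteria / ... structure as one string."""
    sections = [
        ("Goal", goal),
        ("Success criteria", success_criteria),
        ("Constraints", constraints),
        ("Output", output),
        ("Stop rules", stop_rules),
    ]
    parts = [f"Role: {role.strip()}"]
    for title, body in sections:
        if not body.strip():
            # An empty section usually means success was never written down.
            raise ValueError(f"section '{title}' is empty")
        parts.append(f"# {title}\n{body.strip()}")
    return "\n\n".join(parts)


if __name__ == "__main__":
    print(
        build_prompt(
            role="Release-notes writer for an internal tools team",
            goal="A changelog entry that a non-engineer can follow",
            success_criteria="Every merged change is mentioned exactly once",
            constraints="Use only the commit messages provided",
            output="Under 150 words, bullet list, neutral tone",
            stop_rules="Ask before guessing what an unclear commit does",
        )
    )
```

Raising on an empty section is a deliberate choice: it forces the two minutes of thinking about what success looks like before the prompt is ever sent.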

Total AI beginner? Start here (goes with this video).

Have a specific skill you want to learn? Request it here.

🍪 Treats to Try

OpenRouter's Owl Alpha is a stealth high-performance foundation model optimized for agentic workloads with a 1M context window and powerful tool use; the provider logs your prompts for safety. Free to try.

Best Value AI 2026 compares 37+ LLMs across local hardware, APIs, and subscriptions by quality-adjusted tokens per dollar so you can find your best per-dollar option; updated April 2026 with empirical quota tests. Free to use.

Mike is an open-source legal AI that lets you chat with documents for verbatim citations, draft contracts, and run spreadsheet-style tabular reviews across hundreds of files (every cell linked to a page and quote); self-host with your own Claude or Gemini keys. Free and open source.

Claude Code now sends push notifications to your phone when long tasks complete or input is needed; pair your mobile Claude app and use the /remote-control config to enable. Included with Claude Code.

PromptPaste is a private prompt library for Mac, iPhone, and iPad that organizes prompts in folders, supports dynamic variables, and instantly pastes into ChatGPT, Claude, Gemini, or any model with iCloud sync and zero tracking.

📰 Around the Horn

OpenAI shipped Codex for Work to enterprise teams, prompting Aaron Levie at Box to start hiring "agent engineers" to wire process automation into critical business workflows.

Anthropic analyzed 1M Claude conversations and found 6% are people seeking personal guidance; sycophancy hit 25% in relationship conversations specifically, and Opus 4.7 cut that rate in half vs 4.6.

This video shows how DeepSeek V4 shipped with Compressed Sparse Attention plus Heavily Compressed Attention, slashing KV cache memory by up to 98% on long-context tasks; for the architecture deep dive, watch AI Search's 25-minute breakdown.

Elon Musk concluded his federal testimony Thursday after admitting xAI partly distilled OpenAI models, saying of his $38M early donation, "I was a fool," and disclosing a $97.4B Musk-led bid for OpenAI's assets; Greg Brockman testifies next.

Anthropic launched in public beta, and OpenAI rolled out to vetted “critical cyber defenders” — both putting frontier-model capabilities in defenders’ hands.

FROM OUR PARTNERS

Finance AI that works the way your team already does

Woodrow connects to your existing systems and learns your processes, with no rip and replace. Every action stays within the guardrails you define, with a full audit trail for complete visibility. Accuracy is non-negotiable in finance. Woodrow is built accordingly.

💡 Intelligent Insights

The math behind how LLMs are trained and served (Reiner Pope on Dwarkesh): a 2-hour blackboard lecture from the MatX CEO and former Google TPU architect deducing from public API prices that GPT-5 is roughly 100× over-trained beyond Chinchilla-optimal, the optimal inference batch size is ~2,400 sequences, and MoE architectures are physically capped by the size of a single 72-GPU rack.

Big AI labs are "pulling the ladder" on distillation (Clement Delangue, Hugging Face): the same labs that used distillation to build their empires now use lawyers and policy to stop competitors from doing the same, exactly the practice Musk admitted to on the stand this week when he confirmed xAI partly distilled OpenAI models.

Demis Hassabis: the West needs a strong open-source AI stack (via Matthew Berman): Google DeepMind's CEO argues the US risks losing to China without one, and that edge models should be open-source because once they live on a device they're already exposed (full video).

Re-explaining domain knowledge is the AI dev nightmare (Victor Taelin): AGENTS.md, RAG, SKILLs, and fine-tuning all fail to solve unknown unknowns or context rot; nightly fine-tuning on your specific domain is the missing product.

AI as a hydra threat (Derek Thompson): chatbots and coding assistants will keep behaving like normal tech, while AI as a bio/cyber/national-security threat is becoming an existential force that will force rules on the fly.

Inference will eat the world (Astrid Wilde): a five-phase thesis from "experimental capex" through "complete reorganization of the economic order" to "the unknown aftermath of the Compute Revolution."

Matt Pocock's 2-hour AI Engineer workshop on the real workflow for AI coding (256K views) covers the grill-me alignment skill, vertical-slice tracer bullets, AFK Ralph loops, and deep-module architecture for codebases agents can actually navigate.

A Cat’s Commentary

That’s all for now.

P.S: Before you go… have you subscribed to our YouTube Channel? If not, can you?

P.P.S: Love the newsletter, but only want to get it once per week? Don’t unsubscribe—update your preferences here.
